How do AI detectors work?

How can you tell something's AI-generated? When it comes to writing, there are common tells: the excessive use of em dashes, sentences that are too rhythmically clean, and a general smoothness that feels overly engineered.

It's hardly a perfect science, though, and most humans' AI detection skills are based on vibes.

If humans are just relying on instinct, what are AI detectors relying on? Here, Zapier shares everything you need to know about how AI detectors work.

What is an AI detector?

An AI detector is a tool that analyzes content like text, images, or videos, and estimates the likelihood that it was generated by an AI model. Instead of giving a definitive yes-or-no answer, most AI detectors will give you:

- A probability score (for example, "74% likely AI-generated")

- A confidence rating

- Highlighted passages that appear machine-written, if it's text

Their goal isn't to "catch" AI with certainty, but to flag content that statistically resembles AI-generated patterns.

How do AI detectors work?

The specifics of how AI detectors work vary depending on what type of content they're analyzing. For simplicity, this article will focus on AI text detectors. But other types—like AI image detectors—work similarly.

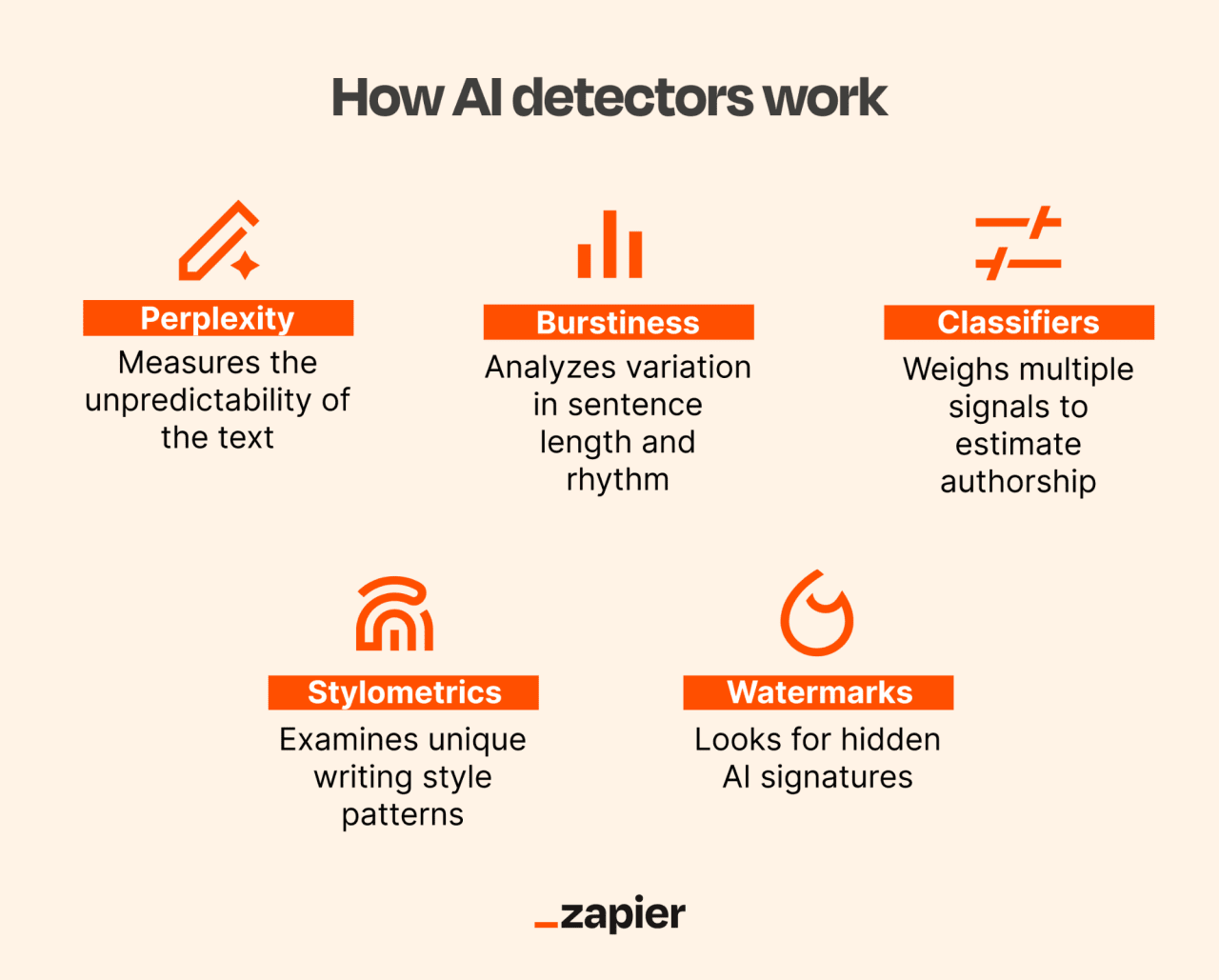

Large language models (LLMs) generate text by predicting the most likely next word based on probability. It's more nuanced than that, but that's the idea. AI detectors reverse-engineer that idea: They look at a finished piece of writing and measure how closely it matches those probability patterns. Here are the main techniques they use.

1. Perplexity

Perplexity (not to be confused with the AI-powered search engine) measures how unpredictable a piece of text is to a language model. The lower the perplexity, the more the wording follows patterns the model expects to see.

AI-generated text often has lower perplexity because it's built from highly common word sequences. It gravitates toward phrasing that's safe, familiar, and structurally sound, which is kind of the point: AI models are trained to predict the most probable next word, not the most chaotic or idiosyncratic one.

Human writing, on the other hand, tends to raise the perplexity score because it's usually less predictable. Unless a ruthless editor sets them straight, humans use words that technically work, even if they're not exactly the right ones. They go off on tangents and litter their work with comma splices because those pauses just feel right to them.
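To make the idea concrete, here is a minimal sketch of a perplexity calculation. It assumes a toy word-frequency model built from a one-line corpus, which is only a stand-in for the neural language model a real detector would use; the function names and sample sentences are invented for illustration.

```python
import math
from collections import Counter

def train_unigram(corpus_tokens):
    """Build add-one smoothed word probabilities from a token list (toy model)."""
    counts = Counter(corpus_tokens)
    total = len(corpus_tokens)
    vocab = len(counts) + 1  # +1 reserves some probability for unseen words

    def prob(token):
        return (counts[token] + 1) / (total + vocab)

    return prob

def perplexity(tokens, prob):
    """exp of the average negative log-probability per token."""
    log_sum = sum(math.log(prob(t)) for t in tokens)
    return math.exp(-log_sum / len(tokens))

# Illustrative corpus and sentences, not real detector data.
corpus = "the cat sat on the mat and the dog sat on the rug".split()
prob = train_unigram(corpus)

predictable = "the cat sat on the mat".split()
surprising = "zebras juggle the purple mat".split()

print(perplexity(predictable, prob))  # lower: the model has seen these words often
print(perplexity(surprising, prob))   # higher: several words are unexpected
```

The sentence made of familiar words scores lower than the one full of words the model has never seen, which is the same direction a detector looks for, just at a much smaller scale.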

2. Burstiness

Burstiness looks at sentence length distribution and structural variation to identify patterns that appear overly consistent.

Humans rarely write in perfect cadence. They mix short sentences with longer ones, occasionally go on tangents, and vary pacing without thinking about it. Earlier AI models, by contrast, tended to produce writing that felt evenly spaced and neatly balanced. Nothing was outright bad, just … suspiciously consistent.

That "too rhythmic" quality is often what sets off our internal AI radar. AI detectors try to quantify that instinct by measuring variation in sentence length, punctuation, and structure. If the tempo barely changes from start to finish, that uniformity can raise a flag.
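One rough way to put a number on that rhythm is sketched below. It assumes burstiness can be approximated as the spread of sentence lengths divided by their average (the coefficient of variation); actual detectors may define it differently, and the sample passages are made up.

```python
import re
import statistics

def sentence_lengths(text):
    """Split on sentence-ending punctuation and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Standard deviation of sentence length divided by the mean (assumed measure)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = "Stop. The dog ran off across the wide green field before anyone noticed. Gone."

print(burstiness(uniform))  # every sentence has four words, so no variation
print(burstiness(varied))   # short and long sentences mixed together
```

A score near zero means the tempo barely changes, which is the uniformity that can raise a flag.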

3. Classifiers

A classifier is a machine learning system trained to categorize text as likely human- or AI-generated. Unlike perplexity or burstiness, which are individual signals, a classifier looks at many features at once and weighs them together.

Developers train these classifiers on large datasets of labeled human and AI text. Through that training, the classifiers learn statistical patterns that tend to separate the two categories. Those patterns can include predictability scores, sentence variation, word frequency distributions, and other structural signals.

When you paste new text into an AI detector, the classifier evaluates how multiple signals interact and then produces a probability score. The final output reflects whether the writing, on average, more closely resembles patterns associated with AI-generated text or human-written text.
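As a rough sketch of how several signals can be weighed together, the example below trains a tiny logistic regression by hand. Everything in it is an assumption for illustration: the two features (a predictability score and a burstiness score, both scaled from 0 to 1), the six labeled samples, and the training settings. Real detectors use far more features and data, and often different model types.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_ai_probability(x, weights, bias):
    """Weighted sum of the features, squashed into a 0-to-1 probability."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def train(samples, labels, lr=0.5, epochs=2000):
    """Plain stochastic gradient descent on the logistic loss."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            err = predict_ai_probability(x, weights, bias) - y
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias

# Invented training data: [predictability, burstiness]; label 1 = AI, 0 = human.
samples = [[0.9, 0.1], [0.8, 0.2], [0.85, 0.15],
           [0.3, 0.7], [0.2, 0.9], [0.35, 0.6]]
labels = [1, 1, 1, 0, 0, 0]

weights, bias = train(samples, labels)
smooth_text = predict_ai_probability([0.88, 0.12], weights, bias)
quirky_text = predict_ai_probability([0.25, 0.80], weights, bias)
print(round(smooth_text, 2), round(quirky_text, 2))
```

The output is a probability rather than a verdict, which is why detectors report scores like "74% likely AI-generated" instead of a yes or no.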

4. Stylometric analysis

Stylometric analysis is the study of writing style, including vocabulary richness, repetition, and sentence complexity. Think of it as your linguistic fingerprint.

The idea is that humans tend to develop quirks over time. For example, the author Fredrik Backman typically writes stories with a sort of progressive repetition that's hard to describe, but is uniquely him. It's what makes his writing so easily distinguishable.

AI writing, by contrast, often clusters around high-probability patterns, generating phrasing that reflects widely represented patterns rather than highly idiosyncratic ones. That's also what makes much of AI writing feel technically solid but vaguely same-y.
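A few of those style signals are simple enough to compute directly. The sketch below assumes three illustrative measures: vocabulary richness as the share of distinct words, average sentence length, and the most repeated words. The function name and sample text are hypothetical, and real stylometric tools track many more features.

```python
import re
from collections import Counter

def stylometric_profile(text):
    """Return a small dictionary of style measurements for a passage."""
    words = re.findall(r"[a-z']+", text.lower())
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    counts = Counter(words)
    return {
        # Distinct words divided by total words: higher means richer vocabulary.
        "type_token_ratio": len(counts) / len(words),
        "avg_sentence_length": len(words) / len(sentences),
        # Up to three of the most frequent words that appear more than once.
        "top_repeated": [w for w, c in counts.most_common(3) if c > 1],
    }

sample = "The sea was calm. The sea was cold. The sea was everything."
profile = stylometric_profile(sample)
print(profile)
```

Heavy, deliberate repetition like this shows up as a low type-token ratio and a short list of dominant words, the kind of quirk that can mark a writer's fingerprint.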

5. Watermark detection

Watermark detection is a way of identifying AI-generated text by looking for a hidden signature baked into the writing itself.

Not all AI models use watermarking, and there isn't one standard way to do it. But when watermarking is enabled, the model slightly nudges its word choices in a consistent, trackable way. The shifts are subtle enough that you wouldn't notice anything while reading, but an AI detector that knows what to look for can spot the pattern.

In theory, that makes AI-generated content easier to trace. In reality, even light editing or paraphrasing can blur or erase the signal. So while watermarking sounds like a clean solution, it's not foolproof.
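Here is a minimal sketch of how such a nudge and its detection could work. It assumes one approach described in published research, sometimes called green-list watermarking, where the previous word determines which next words count as "green" and the generator favors them. The article does not say which scheme any given model uses, and the hashing rule, the stand-in vocabulary, and the function names below are all invented for illustration.

```python
import hashlib
import math
import random

def is_green(prev_token, token):
    """Hypothetical rule: hash the (previous, current) pair; even first byte = green."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode()).digest()
    return digest[0] % 2 == 0

def generate(vocab, length, watermark, rng):
    """Pick random tokens; with watermarking on, prefer green ones."""
    tokens = [rng.choice(vocab)]
    while len(tokens) < length:
        candidates = rng.sample(vocab, k=len(vocab))  # shuffled copy
        if watermark:
            green = [c for c in candidates if is_green(tokens[-1], c)]
            candidates = green or candidates  # fall back if nothing is green
        tokens.append(candidates[0])
    return tokens

def watermark_z_score(tokens):
    """How far the green count sits above the 50% expected by chance."""
    pairs = list(zip(tokens, tokens[1:]))
    green = sum(is_green(prev, tok) for prev, tok in pairs)
    n = len(pairs)
    return (green - 0.5 * n) / math.sqrt(0.25 * n)

vocab = [f"w{i}" for i in range(50)]  # stand-in token IDs, not real words
rng = random.Random(0)
z_marked = watermark_z_score(generate(vocab, 200, True, rng))
z_plain = watermark_z_score(generate(vocab, 200, False, rng))
print(round(z_marked, 1), round(z_plain, 1))
```

The watermarked sequence should score far above what chance would produce, while the unmarked one stays close to zero. Because each score depends on word pairs, swapping out even a single word disturbs two pairs at once, which is one way to see why editing can wash the signal out.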

How accurate are AI detectors?

AI detectors are probabilistic tools, not lie detectors. A detection score reflects how closely writing matches certain patterns. It doesn't prove who or what actually wrote the text.

Here's why accuracy gets complicated.

- False positives happen. Some human writing naturally resembles AI-generated text. If you refuse to give up the em dash and sprinkle it liberally throughout your writing, an AI detector may flag your work as machine-written, even if it isn't.

- False negatives happen. AI models are improving at an alarming speed and learning to mimic human variability more effectively. Humans, for their part, are learning to refine their AI prompts to inject human signals—for example, telling their AI writing generator to mix up sentence patterns or intentionally include errors. As AI writing and human prompting become more nuanced, detection becomes harder.

- Hybrid content blurs the line. Most writing today isn't purely human or AI. AI detectors struggle in this gray area because the final text contains both human and machine signals.

- Results vary across tools. Different AI detectors use different training data and different models. The same paragraph can receive dramatically different scores depending on the platform. That inconsistency makes it risky to rely on a single detection result for high-stakes decisions.
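The trade-off between false positives and false negatives can be sketched with a few lines of code. The scores and labels below are invented, and the cutoff values are arbitrary assumptions; the point is only to show how moving the threshold shifts which kind of mistake a detector makes.

```python
def error_rates(scores, labels, threshold):
    """labels: True = AI-written. Returns (false_positive_rate, false_negative_rate)."""
    flagged = [s >= threshold for s in scores]
    human_flags = [f for f, is_ai in zip(flagged, labels) if not is_ai]
    ai_flags = [f for f, is_ai in zip(flagged, labels) if is_ai]
    false_positive_rate = sum(human_flags) / len(human_flags)       # humans flagged as AI
    false_negative_rate = sum(not f for f in ai_flags) / len(ai_flags)  # AI that slipped by
    return false_positive_rate, false_negative_rate

# Made-up detector scores for four AI-written and four human-written texts.
scores = [0.95, 0.80, 0.55, 0.40, 0.70, 0.30, 0.20, 0.10]
labels = [True, True, True, True, False, False, False, False]

print(error_rates(scores, labels, 0.5))   # (0.25, 0.25)
print(error_rates(scores, labels, 0.75))  # (0.0, 0.5)
```

Raising the cutoff in this toy example stops flagging the human writer but lets half of the AI text through, which is why a single score is a shaky basis for a high-stakes call.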

The bottom line on AI detectors

We're no longer living in a binary world of purely human or purely AI-generated writing. A lot of content now sits somewhere in between. A draft may start with AI, a human reshapes it, AI tightens a paragraph, a human adds a lived example—the lines blur. And AI detectors have to make probabilistic guesses in that gray space.

This story was produced by Zapier and reviewed and distributed by Stacker.
