The AI trust gap: 42% would ask AI before calling a lawyer

When legal trouble surfaces, many Americans don't start with a law office. They start with a search bar.

A new national survey of 1,000 U.S. adults on “The AI Lawyer Trust Gap” finds that 42% of Americans would use AI before contacting a lawyer if they had a legal issue. The appeal is obvious. AI feels fast, private, and free.

But speed does not equal certainty. Beneath that initial curiosity lies a clear boundary: Americans are carefully experimenting with AI for legal questions, not legal decisions.

In this article, Kolmogorov Law breaks down the findings on how Americans view AI in legal matters.

Key Findings

- 42% would use AI before contacting a lawyer.

- 42% trust AI to help them prepare questions for a lawyer.

- 24% don't trust AI with any legal tasks.

- 45% are comfortable sharing sensitive personal details with AI.

- 51% would be fine with their lawyer using AI.

- 60% say lawyers should always disclose when AI is used.

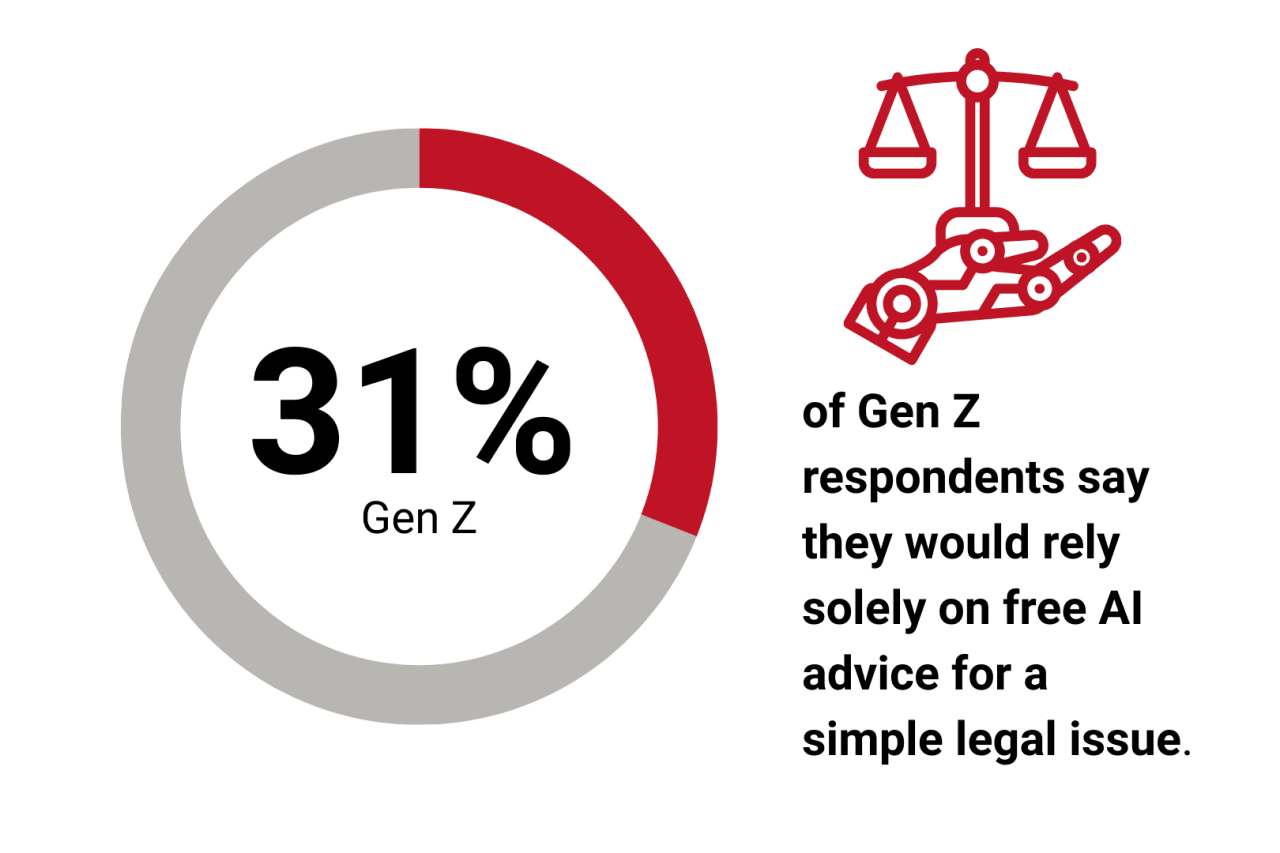

- Only 31% of Gen Zers would rely solely on free AI advice for a simple legal need.

AI Is Becoming the First Stop

AI is emerging as a legal prescreening tool.

More than four in 10 Americans (42%) say they would consult AI before calling an attorney. Among men, that number rises to 48%, compared with 37% among women. Among households earning more than $150,000, 36% would turn to a chatbot if a legal issue felt urgent.

This is not a rejection of lawyers but a continuation of digital habits. For years, Americans have searched for medical symptoms, employment rights, and tax questions before speaking to a professional. AI condenses that process into a conversational format.

In high-stress moments such as a dispute with a landlord, an unexpected letter, or a workplace conflict involving wrongful termination, harassment, or unpaid wages, instant answers feel stabilizing. AI offers immediacy without scheduling delays or hourly fees. It creates a sense of control at a time when control feels scarce.

Yet immediacy carries risk. AI systems are known to generate confident but incorrect responses. In legal matters, misinformation can carry consequences.

AI as a Prep Tool, Not a Substitute

The second 42% reveals where Americans draw the line.

While 42% trust AI to help them prepare questions for a lawyer, preparation is not the same as decision-making.

People appear comfortable using AI to clarify terminology, outline possible next steps, or organize their thoughts before a consultation, especially when navigating contract disagreements or partnership conflicts. It functions as a briefing assistant, allowing someone to walk into a meeting informed rather than overwhelmed.

This signals a shift in consumer behavior. Clients want to show up prepared and use billable time efficiently, so AI becomes a cost-control and confidence-building tool.

For most, however, it does not replace professional authority. Many Americans start with AI, but legal decisions still require interpretation and accountability from a licensed attorney.

Distrust Runs Deep for a Meaningful Minority

For 24% of Americans, the boundary is firm. They do not trust AI with any legal tasks.

That skepticism intensifies among lower-income households. Nearly 29% of those earning under $50,000 reject AI for legal use entirely, compared to just 8% among those earning $150,000 or more.

The income gap suggests confidence plays a role. Higher earners may feel more comfortable verifying information or absorbing potential mistakes. Those with fewer financial buffers may perceive greater risk. Legal decisions can alter finances, housing, or employment, or lead to complex civil disputes that require formal litigation. When the stakes feel permanent, experimentation feels risky.

This hesitation supports a simple principle: Lawyers are licensed, regulated, and accountable under strict professional standards. AI tools are not. If a lawyer gives poor advice, there are ethical standards and disciplinary mechanisms in place. With AI, responsibility can feel unclear. That difference matters.

AI Feels Private, Sometimes More Than Lawyers

If distrust defines one segment of the public, comfort defines another.

Nearly 45% of Americans say they are comfortable sharing sensitive personal details with an AI chatbot to receive legal help. Among parents, that number rises to 58%.

Legal issues often carry embarrassment or vulnerability. Divorce, debt, disputes, and family conflicts can feel difficult to discuss face-to-face. A chatbot feels emotionally neutral. It does not react or judge.

Some respondents even perceive AI as less biased. Fifteen percent of men say they trust AI more than most lawyers because it feels less biased, compared with 6% of women.

The distinction is subtle but important. Many Americans may not trust AI as an authority, but they trust it as a confidant. That emotional dynamic is reshaping how legal conversations begin.

Efficiency Is Welcome. Secrecy Is Not.

Americans are not broadly opposed to AI in legal practice.

A slim majority, 51%, say they would be comfortable with their lawyer using AI to assist in their case. Efficiency and modernization are viewed positively.

At the same time, 60% believe lawyers should always disclose when AI is involved.

This pairing defines the trust gap. The public is not anti-AI; it is anti-ambiguity.

If AI is used, clients want transparency. They want to know who reviewed the output and who is responsible for it. Disclosure reinforces that accountability remains human, even when technology assists.

Without transparency, confidence declines.

Even Gen Z Isn't All In

Gen Z is often described as digitally fearless. The data suggests nuance.

Only 31% say they would rely solely on free AI advice for a simple legal issue. Even the generation most comfortable with technology limits its authority when consequences feel real.

Growing up with digital tools has also meant exposure to misinformation and algorithmic bias. This familiarity appears to produce caution rather than blind trust.

The trust gap is situational. When legal stakes rise, human accountability carries weight.

The Bigger Picture: A Negotiated Future

The AI lawyer debate reflects recalibration rather than conflict. Americans are using AI to orient themselves. They are preparing questions. They are testing ideas. They are weighing convenience against risk.

They are also signaling that final responsibility should remain with humans. AI may become a permanent part of the legal workflow. Public sentiment indicates openness to that reality under clear conditions: transparency, oversight, and accountability. The trust gap reflects boundary-setting. Those boundaries are likely to shape how law and technology evolve together.

Methodology

This survey was conducted nationally among 1,000 U.S. adults on Jan. 29 via Pollfish to measure attitudes toward using AI for legal guidance. Respondents were asked about usage behavior, trust levels, emotional comfort, and expectations around human oversight in legal matters. Percentages reflect aggregated responses.

This story was produced by Kolmogorov Law and reviewed and distributed by Stacker.
